ls -l has a lot more data that would get in the way.

If the files don't need to be readable or modifiable directly, just restored as a whole, a simple solution is to archive them with tar or the like. If the files don't need to be directly readable from the backup, but you want to be able to update the backup, and you have some spare space on the disk, you can use Git to archive the data. As a bonus, you'll be able to recover old versions.
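The tar suggestion could look roughly like the sketch below. The helper names `backup_tar` and `restore_tar` are made up for illustration, and the paths are whatever you pass in. The point is that tar records symlinks inside the archive, so the archive file itself can live on a drive without symlink support.

```shell
# Sketch only: backup_tar and restore_tar are hypothetical helper names.

# Pack the contents of directory $1 into the gzip-compressed archive $2.
# Symlinks are stored as symlink entries inside the archive, so the
# archive can sit on a filesystem that cannot hold symlinks itself.
backup_tar() {
    tar -czf "$2" -C "$1" .
}

# Unpack archive $1 into directory $2 (created if missing). The restore
# target must support symlinks for the links to be recreated.
restore_tar() {
    mkdir -p "$2" && tar -xzf "$1" -C "$2"
}
```

For example, `backup_tar /etc/apache2 /mnt/exfat/apache2.tar.gz` (both paths are placeholders) writes one archive to the drive, and `restore_tar` unpacks it again on a filesystem that supports symlinks.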

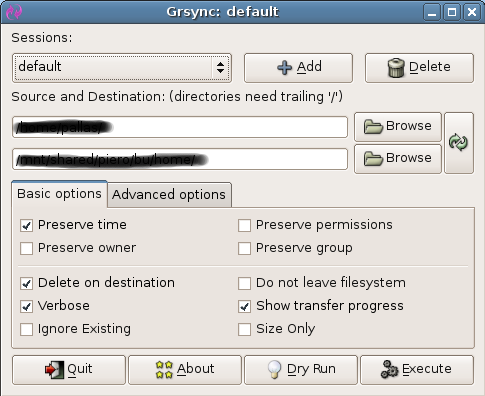

GRSYNC NON REGULAR FILES HOW TO

I'd have to manually extract the symlink data from the command's output, and I'm not sure how to do that.

I need to copy a large directory containing all kinds of files onto a drive that already has a lot of important data. I'd like to be able to copy munged symlinks, but the drive is exFAT and symlinks are not supported. Because the drive already has a lot of data on it, I'd like to avoid having to transfer that data elsewhere in order to reformat it to something that does support symlinks.

Is there a way that rsync could read a symlink such as this:

/etc/apache2/sites-enabled/nf -> sites-available/nf

and generate a regular file like

/etc/apache2/sites-enabled/-munged

containing the link target as text?

I would prefer a solution that is built into rsync or done in a bash pipeline, or that at least uses the basic tools found on any Linux system, but I'm willing to accept other software that offers what rsync does plus this functionality. I see that rsync cannot do such a thing.

One idea: rsync everything but the symlinks, then separately run a 'find' operation to identify all symlinks in the source, and manually create them with a bash script.
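The "rsync everything but the symlinks, then use find" idea could be scripted along these lines. This is only a sketch: `munge_symlinks` is a made-up helper name, the `-munged` suffix just follows the naming in the question, and the paths in the usage comment are placeholders. It relies on `find -type l` to list the links and `readlink` to print each target, which avoids parsing `ls -l` output.

```shell
# Sketch only: munge_symlinks is a hypothetical helper, not an rsync feature.
# For every symlink under SRC, write a regular file "<name>-munged" at the
# same relative path under DEST, containing the link target as one line.
munge_symlinks() (
    src=${1%/}   # drop a trailing slash so the prefix strip below matches
    dest=$2
    find "$src" -type l -exec sh -c '
        src=$1; dest=$2; shift 2
        for link in "$@"; do
            rel=${link#"$src"/}                  # path relative to SRC
            mkdir -p "$dest/$(dirname "$rel")"   # make sure the parent exists
            readlink "$link" > "$dest/$rel-munged"
        done' sh "$src" "$dest" {} +
)

# Assumed usage (placeholder paths):
#   rsync -rt /path/to/src/ /mnt/exfat/backup/    # without -l, rsync skips symlinks
#   munge_symlinks /path/to/src /mnt/exfat/backup
```

Passing the file names through `find -exec ... {} +` instead of a text pipeline keeps names with spaces intact. Restoring would be the reverse: read each `-munged` file and recreate the link with `ln -s` on a filesystem that supports it.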